Migrating from Standard Switch to NVDS with NSX-T 2.4

I have started building up my knowledge of NSX-T. I have had no formal training, but I do have an environment in which I can try things out. So I set up a basic NSX-T environment consisting of one NSX Manager/Controller (starting with 2.4 this is a combined function, which can be clustered), and I created everything needed to make routing and switching available to virtual machines by means of overlay networking.

One other thing I wanted to try is migrating host networking from the standard switches to the newly developed NVDS. If you want more information about the NVDS, I can highly recommend this VMworld 2018 session: N-VDS: The next generation switch architecture with VMware NSX Data Center (NET1742BE)

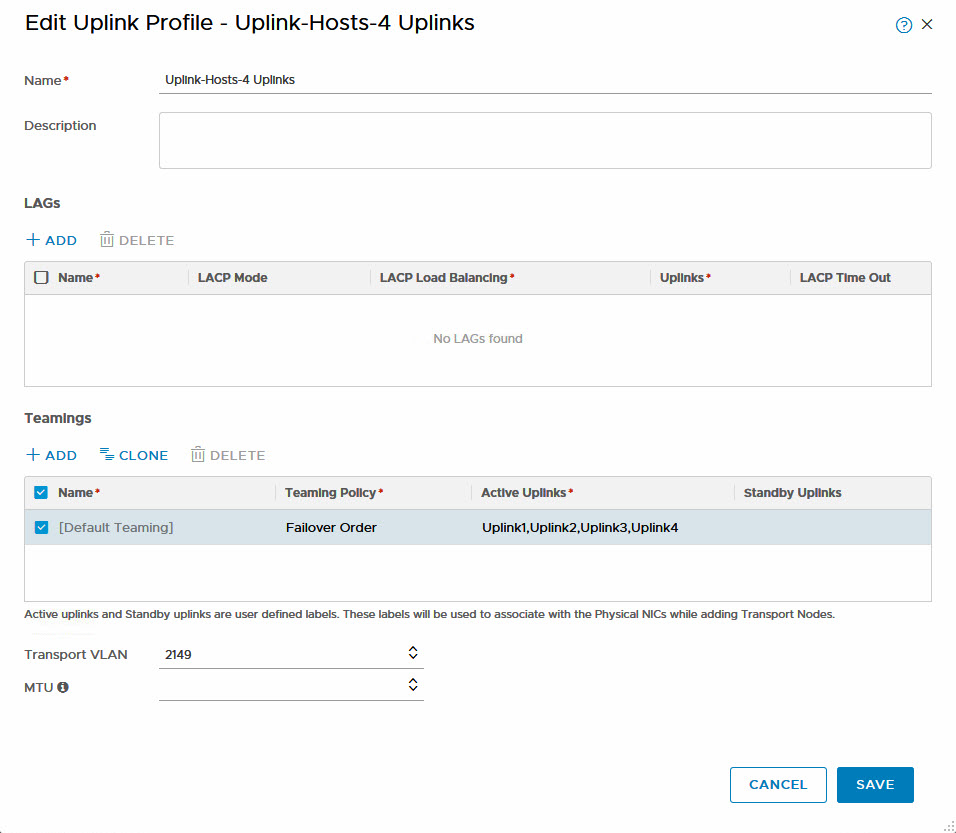

I will describe the environment in a later blog, but for now it is important to know that I have four (nested) hosts, with four “physical” NICs per host. All NICs are connected and configured identically from a VLAN perspective (multiple VLANs live on the physical layer). I have connected the hosts to NSX-T using an Uplink Profile consisting of four uplinks, to which physical adapters can be connected, and I use transport VLAN 2149 for all Overlay-based Logical Switches:

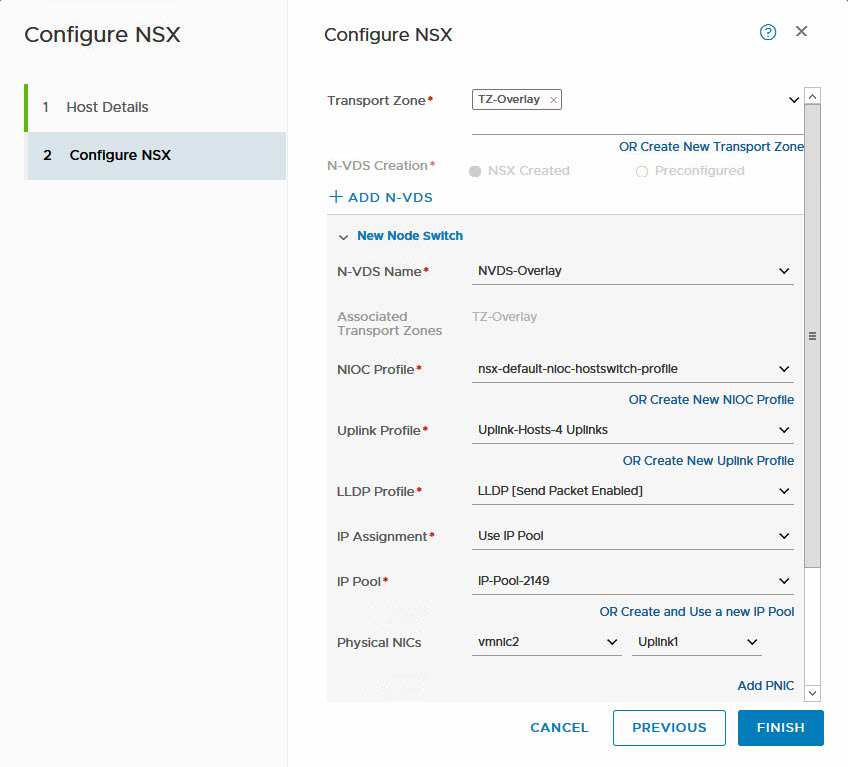

As a starting point, only one of the uplinks is connected:

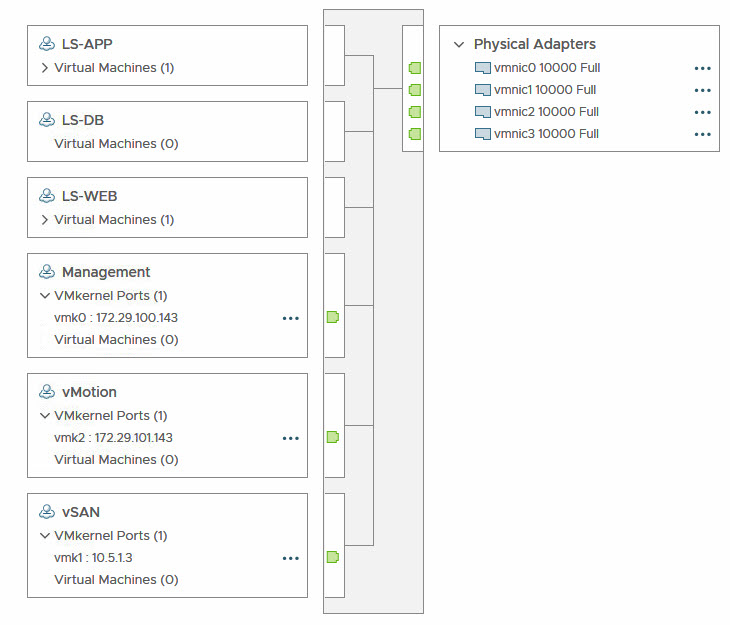

All hosts still have their Management, vMotion and vSAN interfaces living in Standard Switches, connected to three “physical” interfaces. Both those vmkernel adapters and the physical interfaces need to be migrated to an NVDS.

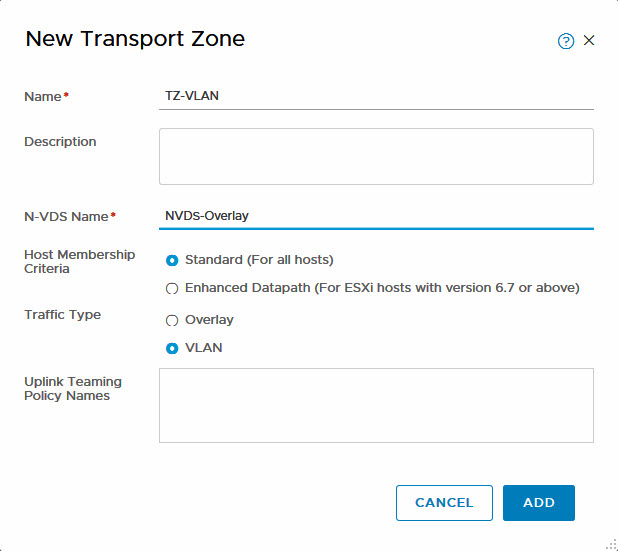

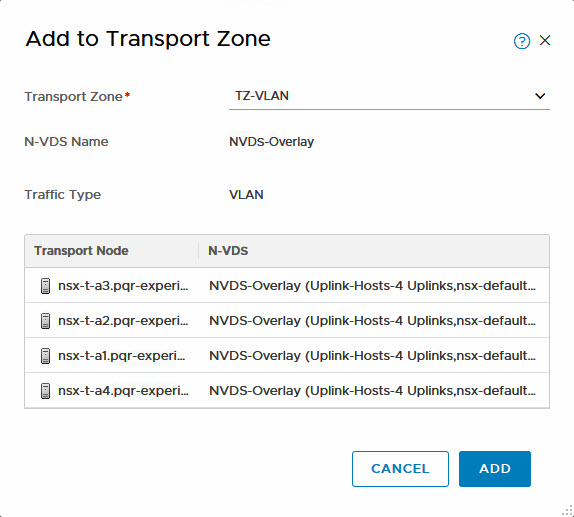

So first of all, I created a new Transport Zone for VLAN-based logical switches. I already had the TZ for the Overlay (and a badly named NVDS, for that matter ;)).

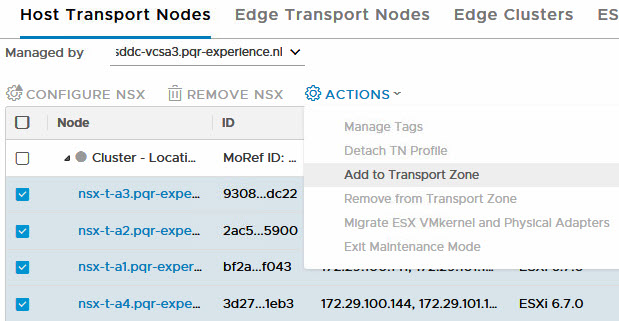

This TZ can then be connected to the Transport Nodes (hosts), while still using the existing physical connection with which the hosts are connected to the NVDS. This is possible because I am using the same NVDS for Overlay as well as for VLAN based Logical Switches (or Segments as they are called in 2.4):

And then:

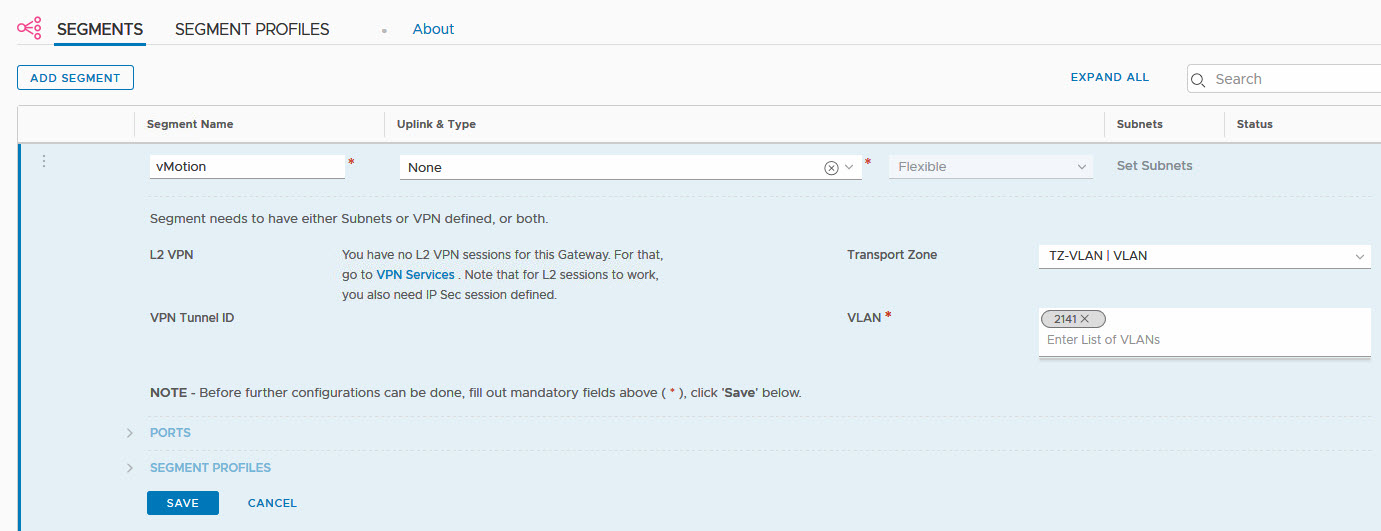
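To make the rule behind this a bit more tangible, here is a minimal sketch in plain Python. It does not talk to NSX-T at all; the class, the function and the names `tz-overlay`, `tz-vlan` and `nvds-1` are made up for illustration. It only encodes what I observed: Transport Zones can share a physical uplink when they are created with the same NVDS name, and a single NVDS can carry several VLAN TZs but only one Overlay TZ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TransportZone:
    name: str            # hypothetical display name
    transport_type: str  # "OVERLAY" or "VLAN"
    nvds_name: str       # NVDS name entered when the TZ was created


def can_share_one_nvds(zones):
    """True if all zones name the same NVDS and at most one of them is Overlay."""
    nvds_names = {tz.nvds_name for tz in zones}
    overlay_count = sum(tz.transport_type == "OVERLAY" for tz in zones)
    return len(nvds_names) == 1 and overlay_count <= 1


# Hypothetical example: one Overlay TZ and one VLAN TZ on the same NVDS.
zones = [
    TransportZone("tz-overlay", "OVERLAY", "nvds-1"),
    TransportZone("tz-vlan", "VLAN", "nvds-1"),
]
print(can_share_one_nvds(zones))
```

If the VLAN TZ had been created with a different NVDS name, the check would fail, and the host would need a separate NVDS (and a separate physical adapter) for it.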

After this, it becomes possible to create some VLAN-based Logical Switches/Segments, to which the vmkernel adapters can be migrated. Let’s start off with the vMotion function, because it is the least critical to the functioning of the hosts (if something went wrong, I would rather have it go wrong on the vMotion interface than on the management or vSAN interface ;)).

Creating a new Logical Switch is fairly easy. If I wanted to, I could select different settings in the profiles section, but for now this will suffice. I created logical switches for Management and vSAN in the same manner.

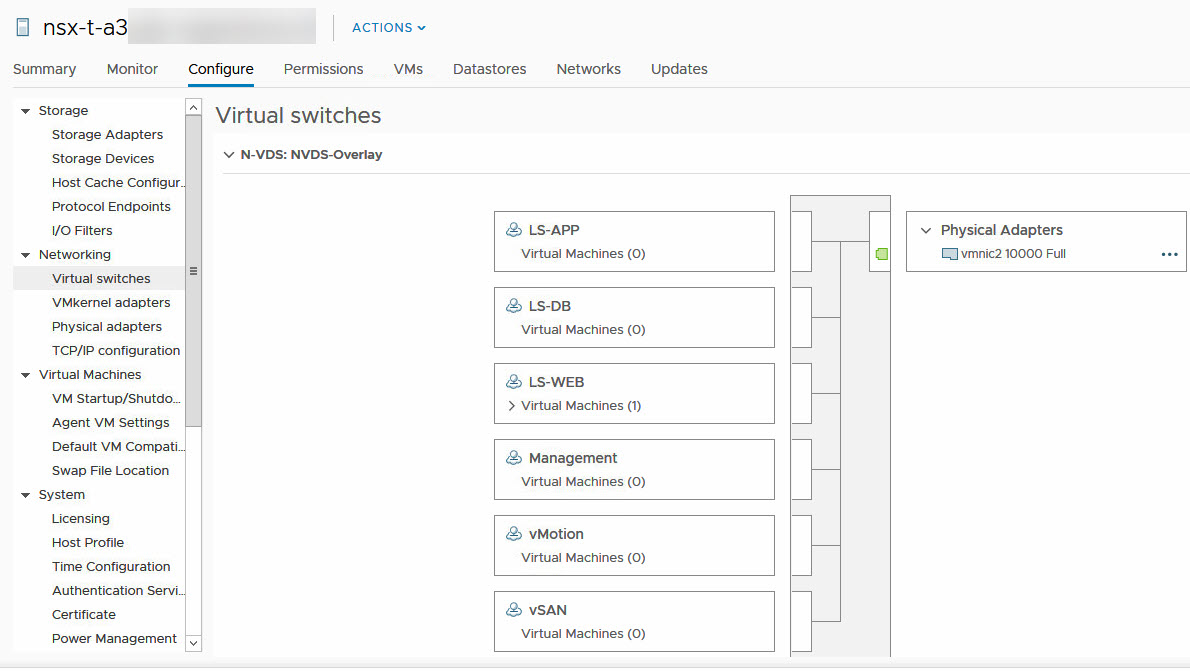

This also results in the creation of these Logical Switches in the ESXi host:

As you can see, there is no visible difference between VLAN-backed Logical Switches and Overlay-based Logical Switches (the ones starting with LS-).

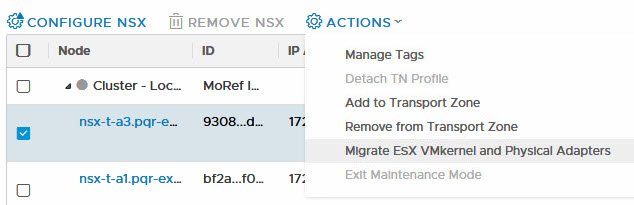

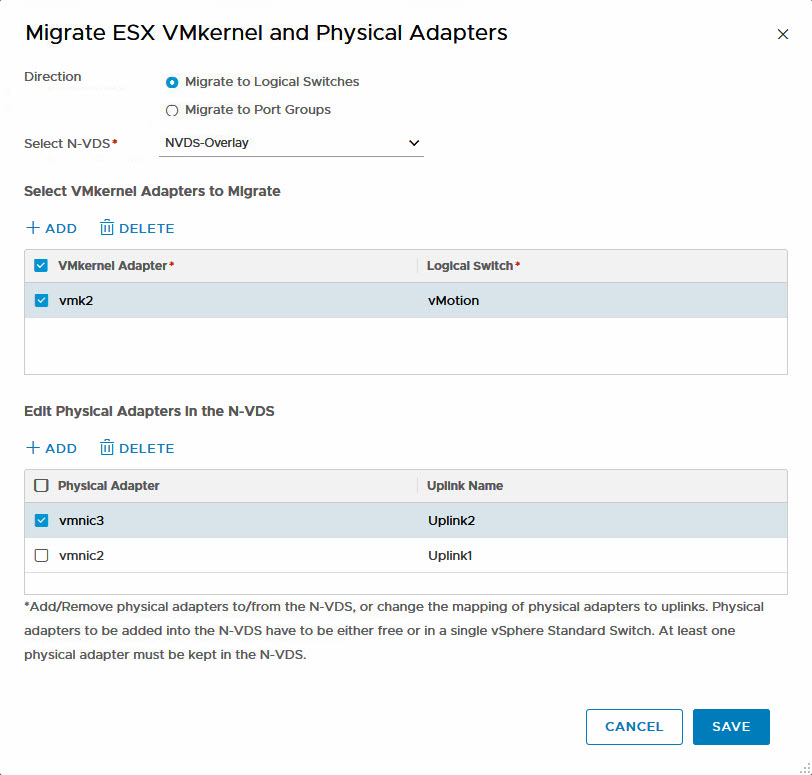

So when all this is done, it is time to actually migrate the vmkernel and physical adapters to the NVDS. As far as I know, this is a per-host activity, so I selected the first host (nsx-t-a3) and selected “Migrate ESX VMkernel and Physical Adapters”:

Then select the correct vmkernel adapters and connect the correct physical adapters to the correct uplinks. Since all physical adapters are connected to the physical network in the same way, there is no real need to differentiate in how the uplinks are used and connected to physical adapters, but I can imagine that in a real-life situation this might be important. If it is necessary to differentiate, this can be done on the Logical Switch.
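Because a wrong choice here can cut a host off from the network, it can help to write the intended mapping down and sanity-check it before clicking through the wizard. The following is only a sketch in plain Python, not an NSX-T API call; the function and all adapter, switch and uplink names (`vmk1`, `vmnic1`, `VLAN-vMotion`, `uplink-1` and so on) are hypothetical placeholders for whatever your environment uses.

```python
def validate_migration(vmk_to_switch, pnic_to_uplink, profile_uplinks):
    """Return a list of problems found in a planned vmkernel/pnic migration."""
    problems = []

    # Every vmkernel adapter needs a destination logical switch.
    for vmk, switch in vmk_to_switch.items():
        if not switch:
            problems.append(f"{vmk} has no destination logical switch")

    # Every physical adapter needs its own uplink from the uplink profile.
    used = {}
    for pnic, uplink in pnic_to_uplink.items():
        if uplink not in profile_uplinks:
            problems.append(f"{pnic} -> {uplink}: uplink not in the uplink profile")
        elif uplink in used:
            problems.append(f"{pnic} and {used[uplink]} both map to {uplink}")
        else:
            used[uplink] = pnic

    return problems


# Hypothetical plan: move the vMotion vmkernel adapter and one physical adapter.
problems = validate_migration(
    vmk_to_switch={"vmk1": "VLAN-vMotion"},
    pnic_to_uplink={"vmnic1": "uplink-2"},
    profile_uplinks=["uplink-1", "uplink-2", "uplink-3", "uplink-4"],
)
print(problems or "plan looks consistent")
```

The check is deliberately simple: it only catches a vmkernel adapter without a destination, an uplink that does not exist in the profile, and two physical adapters assigned to the same uplink.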

And when you click Save, you get a nice Warning:

In my first attempt I just migrated one of the vmkernel adapters and one of the physical adapters, but it is possible to migrate more vmkernel adapters and more physical adapters at the same time.

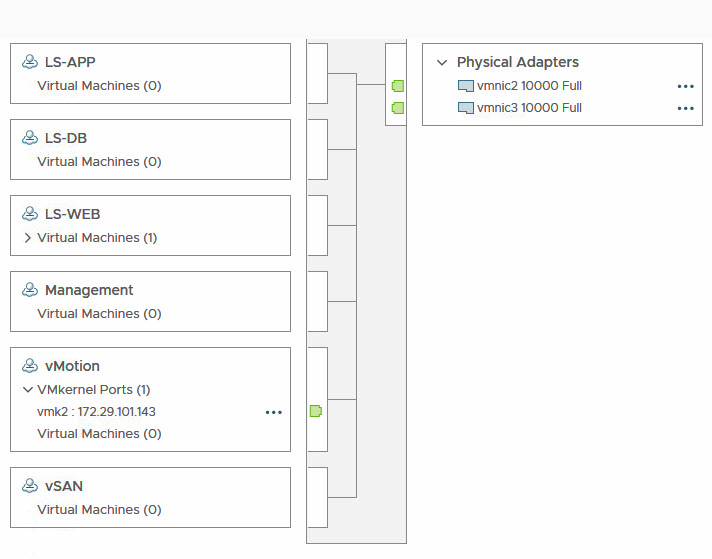

After completion of all this, I can see that the vMotion interface was moved to the NVDS and that the NVDS is now connected to two physical interfaces:

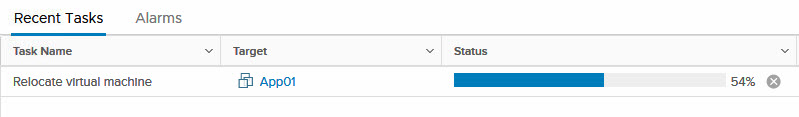

Now, as the proof of the pudding is in the eating, I will migrate a virtual machine to this host:

And as we all know, after 20% the real copying takes place. So feeling confident, I migrated the rest of the vmkernel ports and physical adapters, which led to:

After migrating all the other hosts in the same way (but in one step), all standard switches can be removed and the NVDS is the only way the hosts are connected to the network.

You do get an error about lost connectivity, though, and one missed ping.

Edit: Added information: This will also work for vmkernel adapters connected to distributed switches.

6 thoughts on “Migrating from Standard Switch to NVDS with NSX-T 2.4”

Hi there! I read your article about 50 times but have no clue how you managed to have Overlay and VLAN on the same vmnic…

In my case I can attach vmnic either to overlay TZ or VLAN TZ

I cannot understand what I’m missing, appreciate if you share the magic trick 🙂

p.s. I’m using NSX-T 2.4 and vSphere 6.5 (maybe this is the cause)

When creating the TZ, you have to specify an N-VDS. If you use the same N-VDS for both TZs, you should be okay.

Then, when connecting nodes to the TZs, you select that specific N-VDS and connect it to both TZs. It will detect that both TZs are available through that one N-VDS.

Then you can connect a single NIC to one of the uplinks of that N-VDS, and both the Overlay and VLAN TZs will use the same NIC.

You can connect multiple VLAN TZs to the same N-VDS, but only one Overlay TZ.

Well… I’m an idiot 🙂 I just figured out and then read your reply. I think my brain was too tired to find it yesterday. Thank you for this article, it’s really helpful!!