Deploying a (nested) Workload Domain with VCF

In previous posts we covered the history of VCF (Building a VMware Cloud with VCF (a short history)), the deployment of the Management Domain (Deploying VMware Cloud Foundation – Management Domain), and the management of VCF (Managing VMware Cloud Foundation – First Look). Now it is time to deploy the first Workload Domain (WLD). Since we are running a nested environment, we need to do some preparation before we can proceed.

Preparation

First things first: we need additional hosts. Because we are running nested, we can create as many as we want, and since the WLD will not run a whole lot of activity, we can keep the hosts small and fit quite a few of them in our environment.

For the first WLD, I want to use All-Flash vSAN with Erasure Coding and a Failures to Tolerate (FTT) of 2. Erasure coding with FTT 2 (RAID-6) requires a minimum of six hosts. Again, I am using the nested ESXi OVA available at http://vmwa.re/nestedesxi
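The minimum host count follows from how vSAN places the components of an object. As a minimal sketch, the function below encodes the documented placement rules: mirroring (RAID-1) needs 2 × FTT + 1 hosts, while erasure coding, which is only available on all-flash vSAN, needs 4 hosts for RAID-5 (FTT 1) and 6 hosts for RAID-6 (FTT 2). The name `min_vsan_hosts` is hypothetical and not part of any VMware tool.

```python
def min_vsan_hosts(ftt: int, method: str = "mirroring") -> int:
    """Return the minimum number of hosts vSAN needs for an object with the
    given Failures to Tolerate (FTT) and failure tolerance method."""
    if method == "mirroring":
        # RAID-1: FTT + 1 replicas plus FTT witnesses, each on its own host.
        if not 0 <= ftt <= 3:
            raise ValueError("mirroring supports FTT 0 to 3")
        return 2 * ftt + 1
    if method == "erasure_coding":
        # RAID-5 is 3 data + 1 parity, RAID-6 is 4 data + 2 parity.
        ec_hosts = {1: 4, 2: 6}
        if ftt not in ec_hosts:
            raise ValueError("erasure coding supports FTT 1 (RAID-5) or 2 (RAID-6)")
        return ec_hosts[ftt]
    raise ValueError(f"unknown failure tolerance method: {method}")


if __name__ == "__main__":
    for ftt, method in [(1, "mirroring"), (2, "mirroring"),
                        (1, "erasure_coding"), (2, "erasure_coding")]:
        print(f"FTT={ftt} {method}: {min_vsan_hosts(ftt, method)} hosts")
```

Running it prints 3, 5, 4, and 6 hosts for the four combinations.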

This time I created six nodes, with the following changes to the default deployment:

- 1 CPU with 4 cores

- 16 GB memory

- Expanded the first disk to 4 GB to be able to patch ESXi manually

- Expanded the second disk to 4 GB as the vSAN cache disk

- Expanded the third disk to 60 GB as the vSAN capacity disk

- Changed the start-up policy of the NTP service to “Start and stop with host”

- Updated ESXi to the correct build for VCF 3.7.1

This time I created a base image, performed all the necessary actions on it, and then cloned it five times. William Lam has described the steps needed to be able to clone a nested ESXi virtual machine. His post was written for 6.5, but the steps work for 6.7 as well. The post can be found here, and again, I am very much indebted to William for his awesome work for the community.

After cloning, it is still necessary to change the FQDN and the IP address of each clone.
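Changing the identity of a clone can be done from the ESXi Shell on the VM console. The sketch below only generates the commands; it assumes static addressing on `vmk0`, and the host names, domain, and IP addresses are hypothetical example values. `esxcli system hostname set` and `esxcli network ip interface ipv4 set` are standard ESXi commands. Run the IP change from the console rather than over SSH, because a session to the old address drops as soon as `vmk0` changes.

```python
from dataclasses import dataclass


@dataclass
class CloneIdentity:
    hostname: str  # short host name (hypothetical example value)
    domain: str    # DNS domain (hypothetical example value)
    ip: str        # new static IPv4 address for vmk0
    netmask: str   # subnet mask of the management network


def clone_commands(clone: CloneIdentity) -> list[str]:
    """Build the esxcli commands that give a cloned host its own FQDN and IP."""
    fqdn = f"{clone.hostname}.{clone.domain}"
    return [
        # Set host name and domain in one go.
        f"esxcli system hostname set --fqdn={fqdn}",
        # Re-address the management VMkernel interface; the default gateway
        # is left untouched.
        "esxcli network ip interface ipv4 set --interface-name=vmk0 "
        f"--type=static --ipv4={clone.ip} --netmask={clone.netmask}",
    ]


if __name__ == "__main__":
    # Six hypothetical WLD hosts: esxi-wld-01 .. esxi-wld-06.
    for i in range(1, 7):
        clone = CloneIdentity(f"esxi-wld-{i:02d}", "lab.local",
                              f"192.168.10.{100 + i}", "255.255.255.0")
        print("\n".join(clone_commands(clone)))
```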

With the cloning done and these changes made, I have six hosts available for the first WLD.

Commission Hosts

Before we can create our first WLD, we need to commission the hosts. This is a process in which the hosts are checked for compliance with VCF and brought under the control of the SDDC Manager.

To commission hosts, we need a network pool. A network pool defines the vMotion and (if applicable) vSAN networks, including the IP ranges from which the hosts receive their addresses. Normally we would create separate networks for each WLD, but since my VLANs are limited, I chose to use the existing network pool that was created with the Management Domain (MD).

The existing network pool can be found here:

I extended the IP ranges in the pool so that the new hosts can be added to it.
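Each commissioned host takes one address from the vMotion range and, with vSAN, one from the vSAN range, so each range needs at least one free address per new host. A minimal sketch of that check using Python's `ipaddress` module; the ranges and the four addresses already used by the MD are hypothetical example values.

```python
import ipaddress


def free_addresses(start: str, end: str, used: int) -> int:
    """Number of unassigned addresses in the inclusive range start..end."""
    first = ipaddress.ip_address(start)
    last = ipaddress.ip_address(end)
    if last < first:
        raise ValueError(f"range end {end} lies before range start {start}")
    return int(last) - int(first) + 1 - used


def pool_has_room(start: str, end: str, used: int, new_hosts: int) -> bool:
    """True if the range still has one free address for every new host."""
    return free_addresses(start, end, used) >= new_hosts


if __name__ == "__main__":
    # Hypothetical vMotion range with four addresses already used by the MD.
    print(pool_has_room("192.168.20.10", "192.168.20.15", used=4, new_hosts=6))  # False
    # The same range after extending it.
    print(pool_has_room("192.168.20.10", "192.168.20.29", used=4, new_hosts=6))  # True
```

Run the same check for the vSAN range, since both ranges must have room.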

Within the SDDC Manager Dashboard, we get the option to commission hosts. We can choose to commission all hosts in one go:

And when we start commissioning hosts, we get the checklist to make sure we thought of everything (which we have ;)):

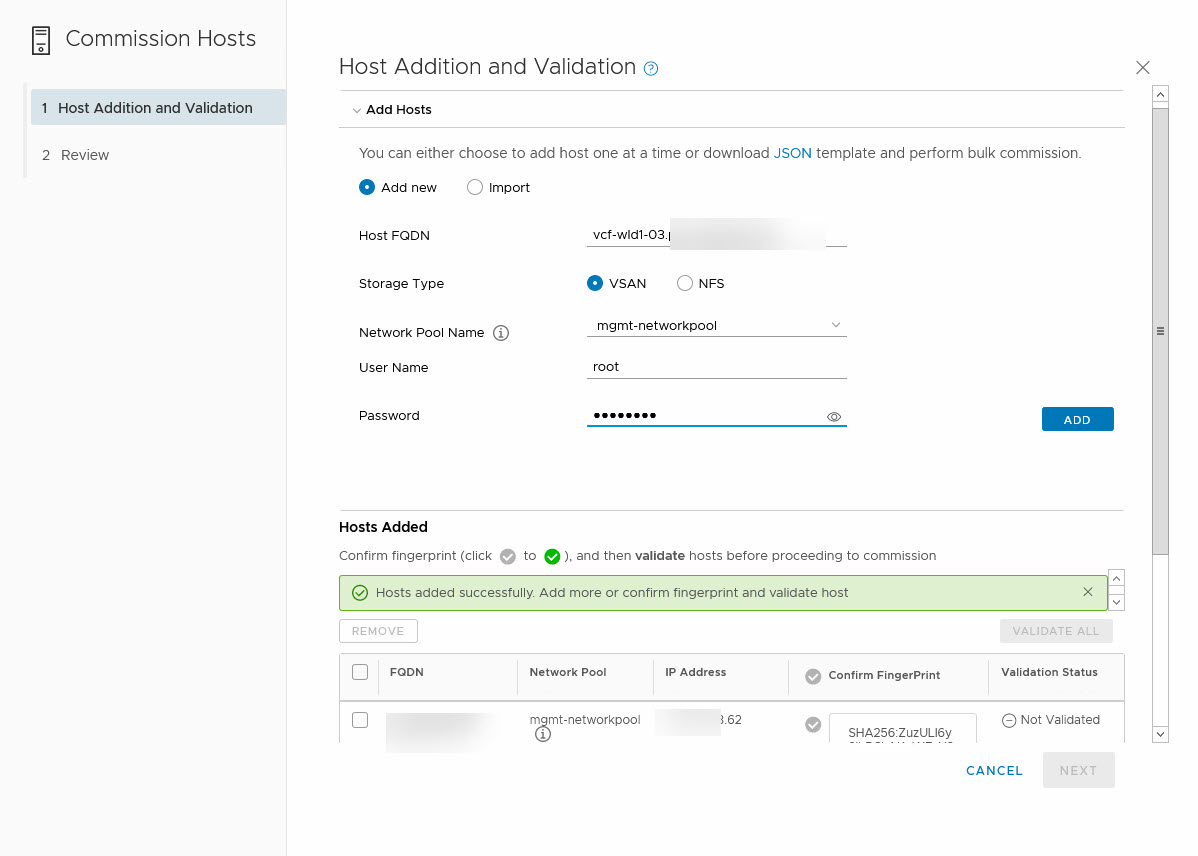

When all boxes are checked, we can proceed to the next page, where we list the hosts we are adding. Here we can add each host individually or use an import file. In this case, I chose to add them manually:

When all hosts are in the list, we confirm their fingerprints (by clicking the checkmark next to “Confirm FingerPrint”) and then choose to validate the hosts.

This validates all hosts and makes sure that you haven’t just signed off on the checklist without actually doing the necessary work. When validation completes successfully, we can go to the next step, which adds the hosts to the SDDC Manager’s inventory:

After we click Next, we can review all information and start commissioning the hosts:

Commissioning the hosts triggers a series of tasks, and we can follow their progress:

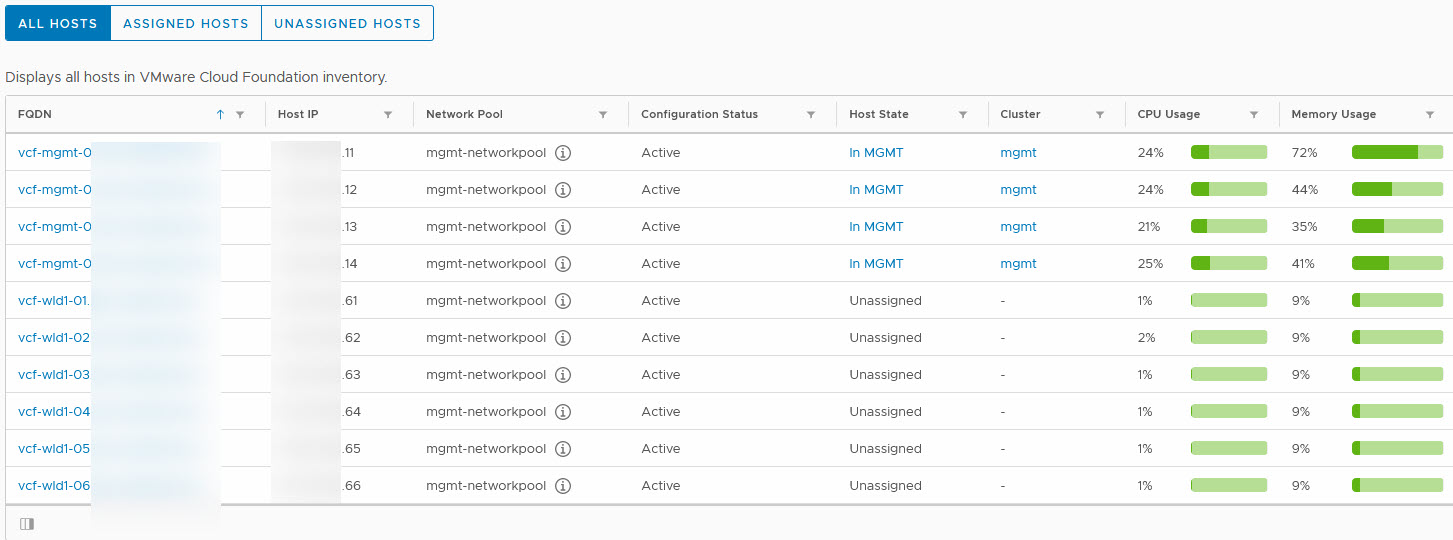

After the commissioning is done, we can see all the hosts available to us in the SDDC Manager:

Now that the hosts are available, we can start creating our first WLD. Of course, we also need to make sure that every entity we are going to deploy is configured in DNS for forward and reverse lookup.

In our case, where we are using NSX-T, the following entities need DNS records:

- vCenter Server

- NSX-T VIP

- NSX-T Manager 1

- NSX-T Manager 2

- NSX-T Manager 3
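Before starting the wizard, it is worth checking these records from a machine that uses the same DNS servers as the SDDC Manager. A minimal sketch using Python's standard `socket` module: it resolves each name forward, resolves the resulting IP back, and checks that the PTR record returns the same name. The resolver functions are parameters only so the logic can be exercised without a DNS server; `check_dns` and `check_all` are hypothetical helper names.

```python
import socket
from typing import Callable


def _ptr_name(ip: str) -> str:
    """Reverse lookup: return the primary host name for an IP address."""
    return socket.gethostbyaddr(ip)[0]


def check_dns(fqdn: str,
              resolve: Callable[[str], str] = socket.gethostbyname,
              reverse: Callable[[str], str] = _ptr_name) -> tuple[bool, str]:
    """Check that fqdn resolves forward to an IP and that the reverse (PTR)
    lookup of that IP returns the same name. Returns (ok, detail)."""
    try:
        ip = resolve(fqdn)
        name = reverse(ip)
    except OSError as exc:  # socket.gaierror and socket.herror are OSErrors
        return False, f"{fqdn}: lookup failed ({exc})"
    # Compare case-insensitively and ignore a trailing dot.
    ok = name.rstrip(".").lower() == fqdn.rstrip(".").lower()
    return ok, f"{fqdn} -> {ip} -> {name}"


def check_all(fqdns: list[str]) -> bool:
    """Print one line per record and return True only if all of them pass."""
    all_ok = True
    for fqdn in fqdns:
        ok, detail = check_dns(fqdn)
        print("OK  " if ok else "FAIL", detail)
        all_ok = all_ok and ok
    return all_ok
```

For example, `check_all(["wld-vc01.lab.local", "wld-nsxt.lab.local", "wld-nsxt01.lab.local", "wld-nsxt02.lab.local", "wld-nsxt03.lab.local"])` would cover the vCenter Server, the NSX-T VIP, and the three NSX-T Managers, using hypothetical names.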

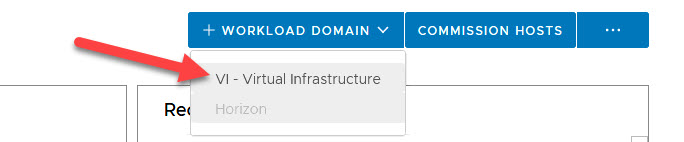

We select + Workload Domain and choose the type. In our environment, the only option is VI – Virtual Infrastructure. This is because the Horizon bundle has not been downloaded.

We need to choose the type of storage for our new WLD:

We choose vSAN and click Begin. The wizard then walks us through several screens that ask for quite a bit of information. Rather than showing every screen here, for some I have simply listed the questions asked:

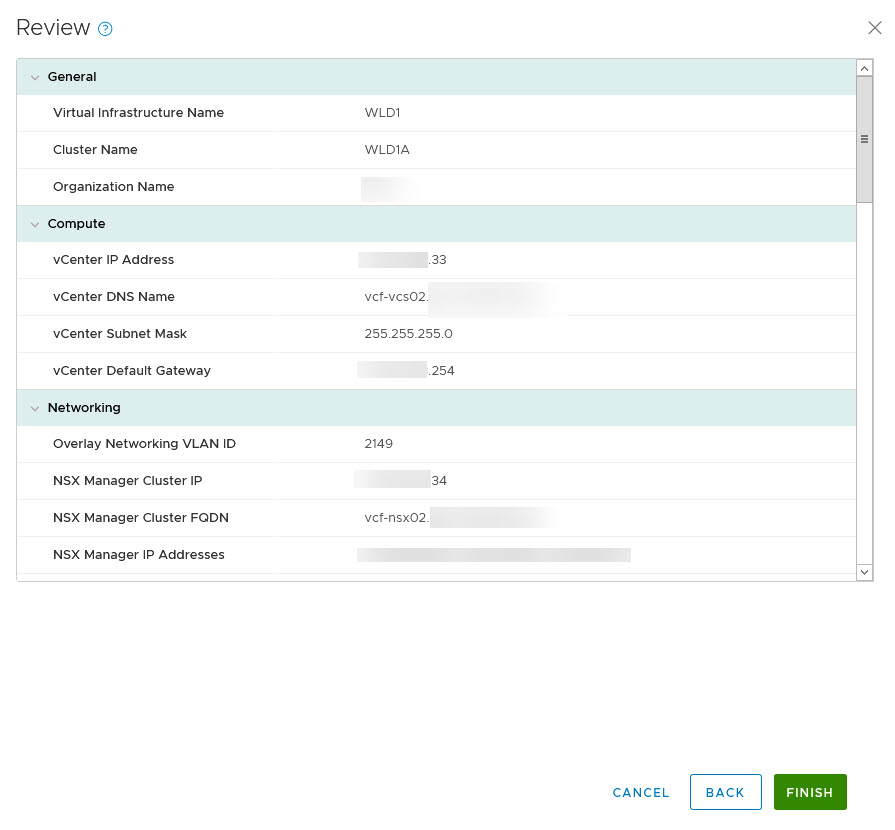

Name

- Virtual Infrastructure Name

- Cluster Name

- Organization Name

Compute

- vCenter IP Address

- vCenter Name

- vCenter Subnet Mask (prefilled, based on the deployment of the MD)

- vCenter Default Gateway (prefilled, based on the deployment of the MD)

- vCenter Root Password

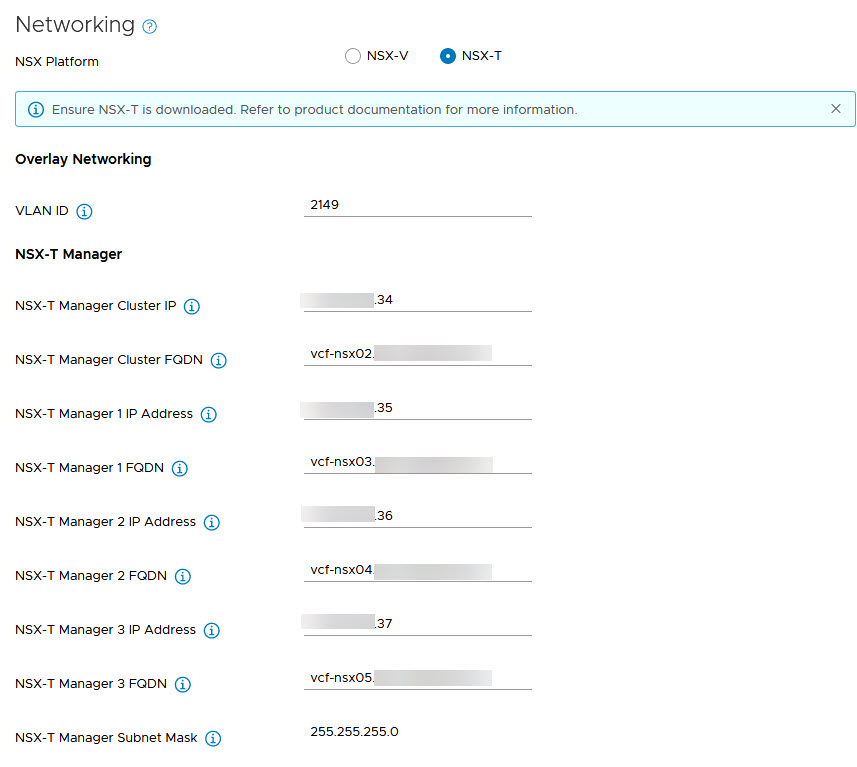

Networking

In Networking, we choose between NSX-V and NSX-T. With NSX-V, you create a separate NSX environment for each WLD, because each NSX Manager is paired with a single vCenter Server. With NSX-T, you create one environment that is shared by all WLDs configured for NSX-T.

vSAN Parameters

- Failures to Tolerate (determines the number of hosts required)

Host Selection

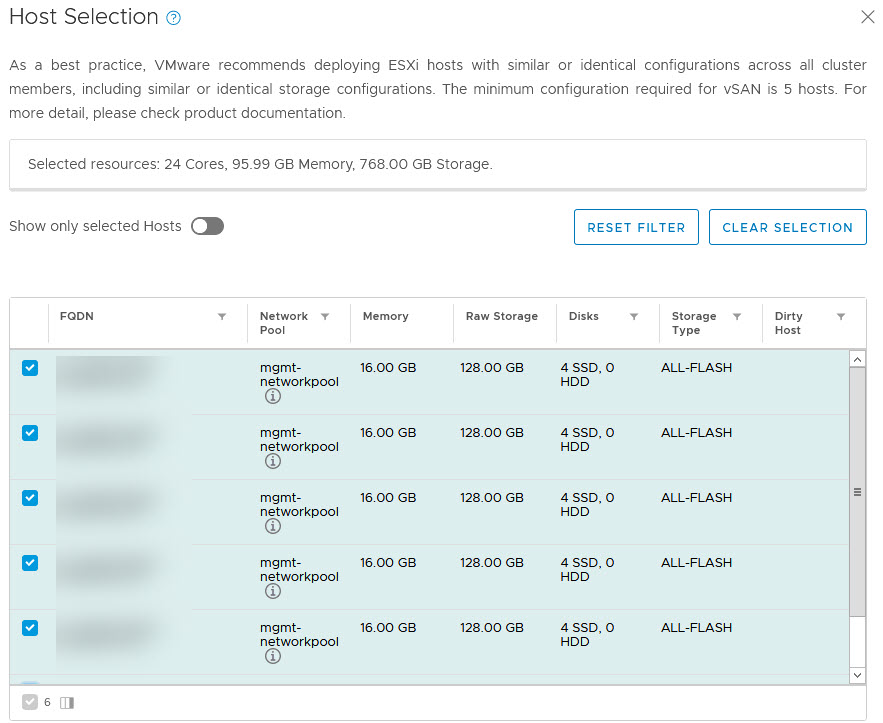

Here we choose which hosts will be added to our WLD. The wizard checks that enough hosts are selected for the Failures to Tolerate chosen earlier. We selected 5 of our 6 commissioned hosts (so we can extend the cluster in another blog post ;)):

License

Here we select the licenses for the products in use (in our case NSX-T, vSAN, and vSphere).

Object Names

This screen displays all generated object names, which we accept as they are.

Review

This screen shows all the information for a final review before we click Finish:

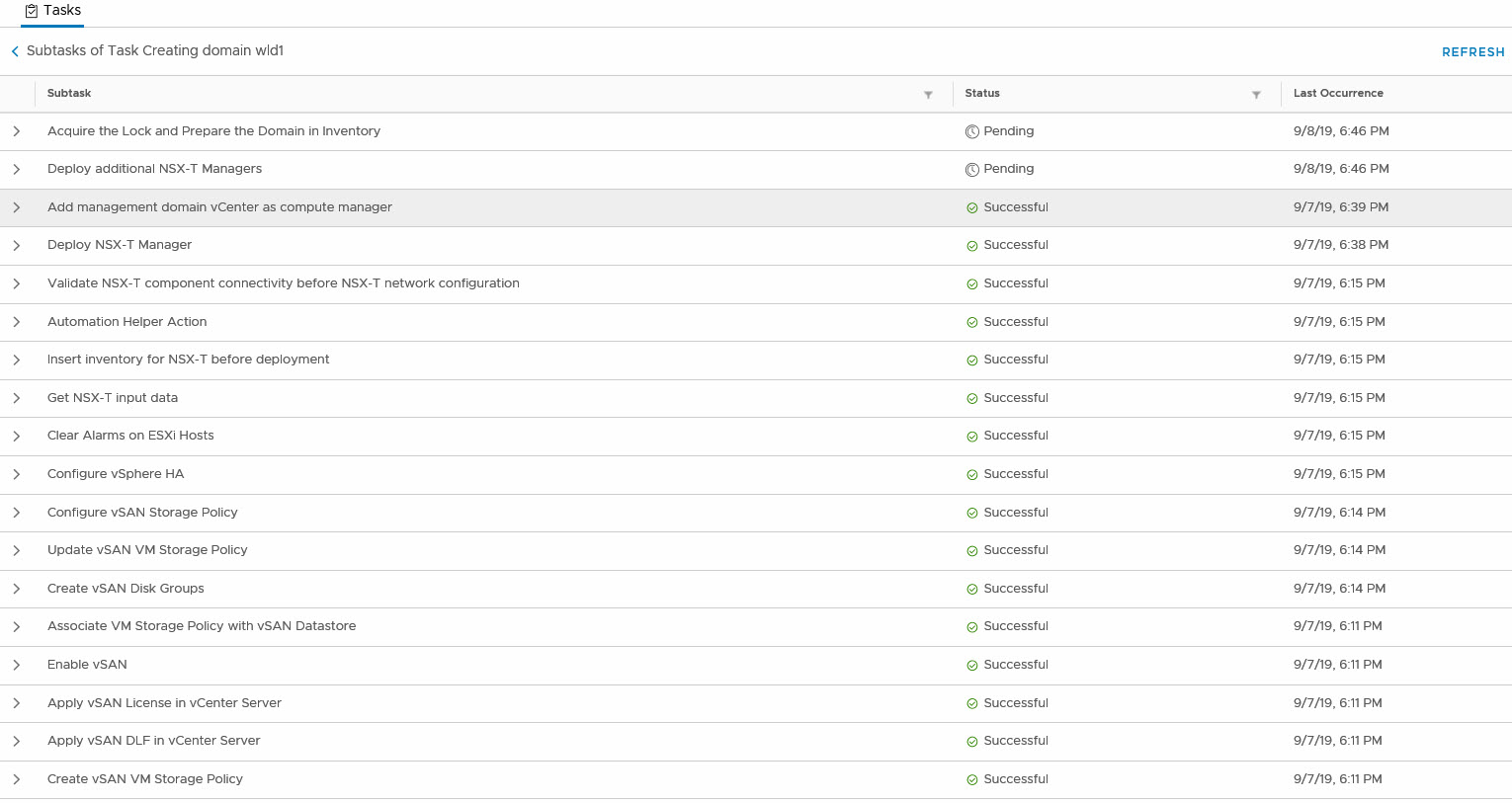

And after we click Finish, the SDDC Manager is going to create a Workload Domain and all necessary components.

Now, this is a little tricky, since we are running nested with limited resources. We need to make sure beforehand that all newly deployed components can actually run. For NSX-T, for example, VCF deploys three NSX-T Managers, each with 48 GB of memory (fully reserved) and 12 vCPUs, so the management hosts must be able to accommodate a combined 144 GB of reserved memory and 36 vCPUs.
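A fully reserved VM also has to fit on a single host, so the total alone is not enough. The sketch below adds up the footprint of the three NSX-T Managers (12 vCPUs and 48 GB of fully reserved memory each) and then does a rough first-fit-decreasing placement against the unreserved memory of each management host. The per-host figures are hypothetical, and the check ignores VM memory overhead, HA admission control, and DRS rules; it is not how vSphere actually places VMs.

```python
from dataclasses import dataclass


@dataclass
class Appliance:
    name: str
    count: int      # number of VMs of this type
    vcpus: int      # vCPUs per VM
    memory_gb: int  # memory per VM, assumed fully reserved


def footprint(appliances: list[Appliance]) -> tuple[int, int]:
    """Total (vCPUs, reserved memory in GB) across all appliances."""
    vcpus = sum(a.count * a.vcpus for a in appliances)
    memory_gb = sum(a.count * a.memory_gb for a in appliances)
    return vcpus, memory_gb


def fits_first_fit(appliances: list[Appliance], host_free_gb: list[int]) -> bool:
    """Rough check that every fully reserved VM lands on some host with enough
    unreserved memory, using first-fit decreasing."""
    free = sorted(host_free_gb, reverse=True)
    demands = sorted((a.memory_gb for a in appliances for _ in range(a.count)),
                     reverse=True)
    for need in demands:
        for i, avail in enumerate(free):
            if need <= avail:
                free[i] -= need
                break
        else:
            # No host has enough unreserved memory left for this VM.
            return False
    return True


if __name__ == "__main__":
    nsx_t = [Appliance("NSX-T Manager", count=3, vcpus=12, memory_gb=48)]
    print(footprint(nsx_t))                         # (36, 144)
    # Hypothetical unreserved memory per management host, in GB.
    print(fits_first_fit(nsx_t, [40, 40, 40, 40]))  # False
    print(fits_first_fit(nsx_t, [64, 64, 64, 64]))  # True
```

In the first case the four hosts together have 160 GB free, more than the 144 GB needed, yet no single host can take a 48 GB reservation.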

But once all of this has completed successfully, we have a new, empty WLD with a new cluster in it (sorry, no picture yet :)).
