VCF on VCD: iSCSI Configuration

Because my lab has seen a couple of storage failures, I was advised to move my management VMs off vSAN: running a distributed storage layer on top of another distributed storage layer is not the best option from an availability perspective.

So I have been building a couple of TrueNAS iSCSI servers to store my VMs on, and to configure replication between them at the same time, so I am better protected against storage issues.

So, to start, we import the appliance, which contains TrueNAS-13.0-U4. I have added one additional NIC so that iSCSI is actively served on two VLANs (1615 and 1815), one per NIC. One VLAN is for the first VCF environment, the other for the second.

I also add eight 512 GB disks, in order to create four iSCSI volumes of approximately 1 TB each.
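To make the capacity math explicit, here is a small Python sketch of how the eight disks split into four two-disk pools. The disk names (`da1` to `da8`) are hypothetical placeholders, and the figures are raw capacity before any ZFS overhead, which is why the volumes end up only "approximately" 1 TB.

```python
# Sketch: split eight 512 GB disks into four two-disk pools.
# Disk names are hypothetical; sizes are raw, before ZFS overhead.
DISK_SIZE_GB = 512
DISKS = [f"da{i}" for i in range(1, 9)]


def plan_pools(disks, disks_per_pool=2):
    """Group consecutive disks into pools named iscsi01, iscsi02, ..."""
    if len(disks) % disks_per_pool:
        raise ValueError("disk count must be a multiple of disks_per_pool")
    return {
        f"iscsi{n:02d}": disks[i:i + disks_per_pool]
        for n, i in enumerate(range(0, len(disks), disks_per_pool), start=1)
    }


pools = plan_pools(DISKS)
for name, members in pools.items():
    # A stripe adds up the capacity of its members.
    print(name, members, len(members) * DISK_SIZE_GB, "GB")
```

Each pool comes out at 1024 GB raw, matching the four roughly 1 TB volumes.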

After booting up the TrueNAS system, we set its management IP address to 172.30.0.201. The VLANs for iSCSI are configured later.

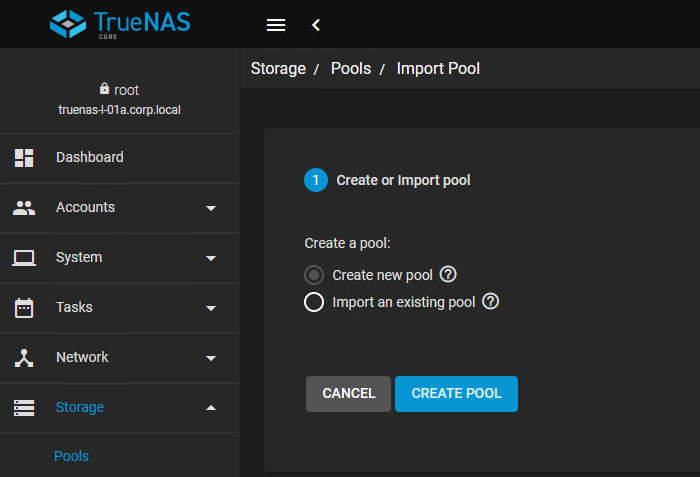

We start by configuring the storage, creating four storage pools (iscsi01 to iscsi04):

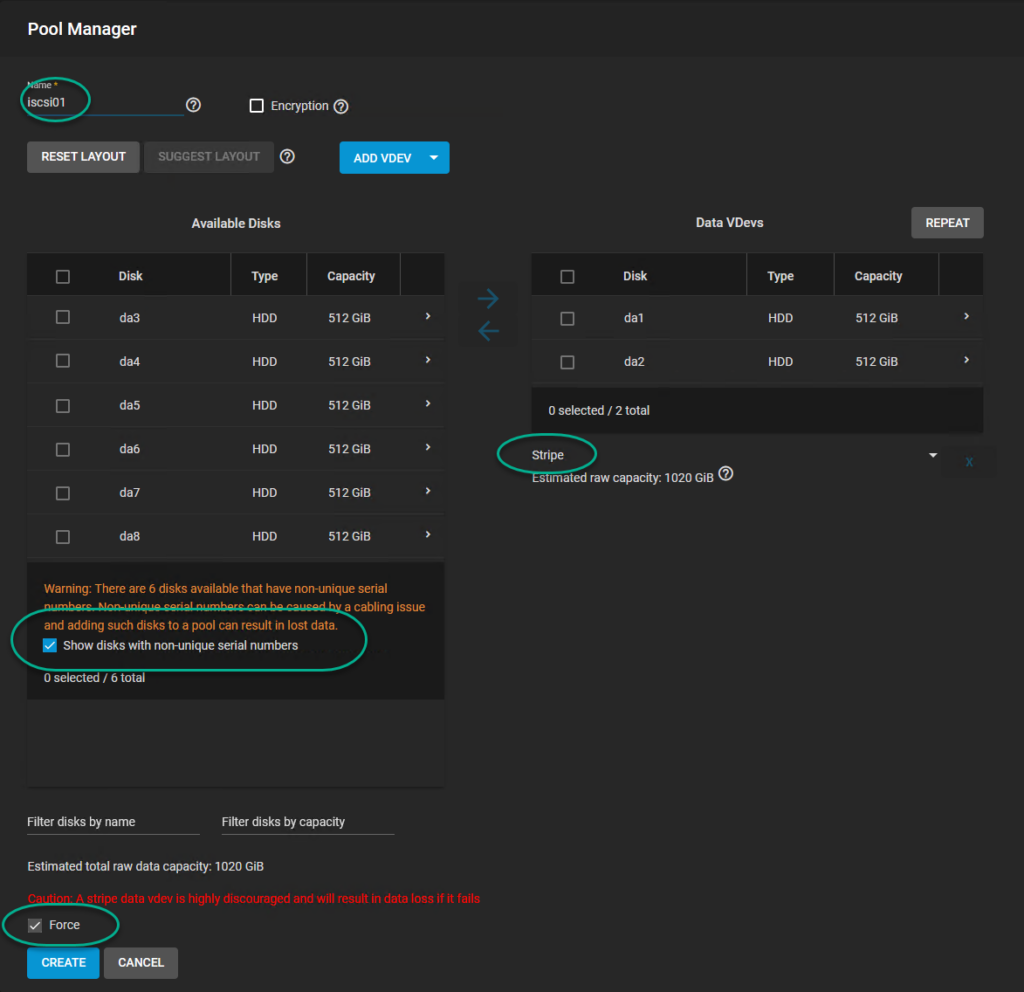

For each pool, I select two 512 GB disks and configure them as a stripe (maximizing capacity):

Of course, this is not something to do outside a lab environment: a stripe offers no redundancy, so losing either disk loses the whole pool.

Choosing this prompts a couple of confirmations, to ensure you understand the risk.

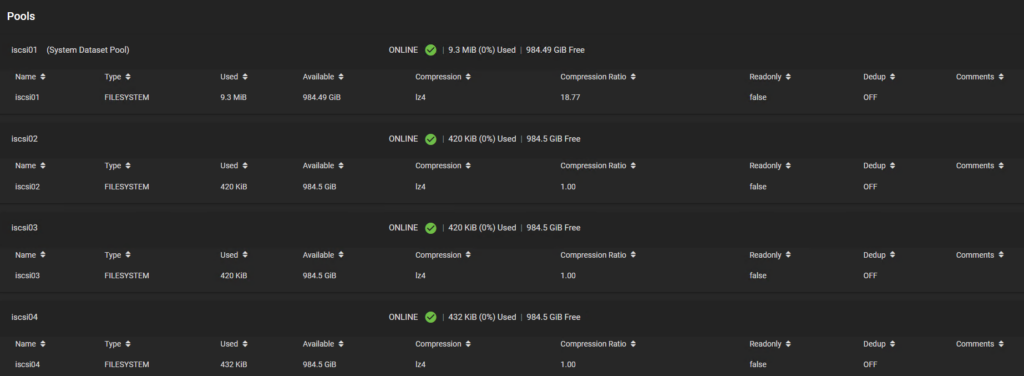

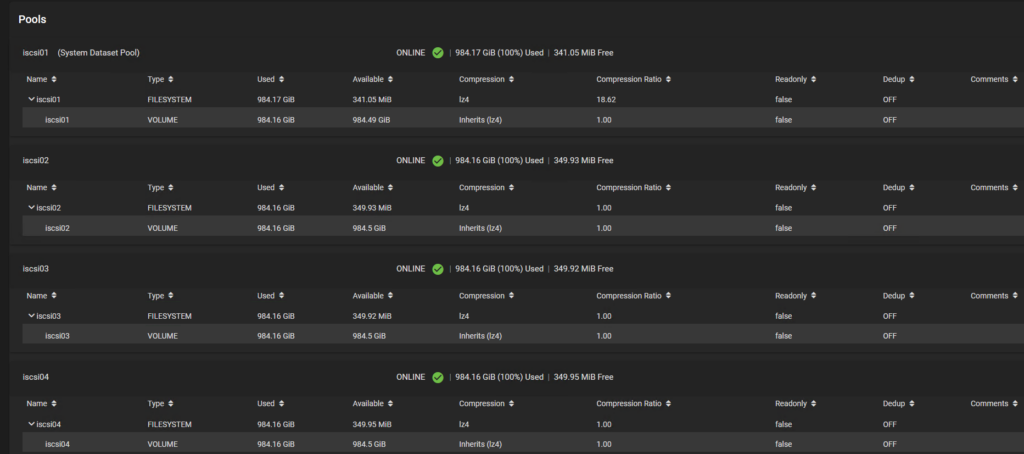
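The trade-off behind those confirmations can be put in numbers. The sketch below compares a two-disk stripe with a two-disk mirror; it is a simplification that ignores ZFS metadata and reservations, and the mirror appears only as the redundant alternative, not as what I configured here.

```python
# Sketch: rough usable capacity and fault tolerance of a stripe vs a mirror.
# Simplified: ignores ZFS metadata, reservations and compression.

def usable_capacity_gb(layout, disk_sizes_gb):
    if layout == "stripe":
        return sum(disk_sizes_gb)      # every disk adds capacity
    if layout == "mirror":
        return min(disk_sizes_gb)      # every disk holds a full copy
    raise ValueError(f"unsupported layout: {layout}")


def disk_failures_tolerated(layout, disk_count):
    if layout == "stripe":
        return 0                       # losing any disk loses the pool
    if layout == "mirror":
        return disk_count - 1          # one surviving copy is enough
    raise ValueError(f"unsupported layout: {layout}")


for layout in ("stripe", "mirror"):
    print(layout,
          usable_capacity_gb(layout, [512, 512]), "GB,",
          disk_failures_tolerated(layout, 2), "disk failure(s) tolerated")
```

The stripe doubles the usable space of the mirror, at the price of tolerating zero disk failures.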

After all pools have been created:

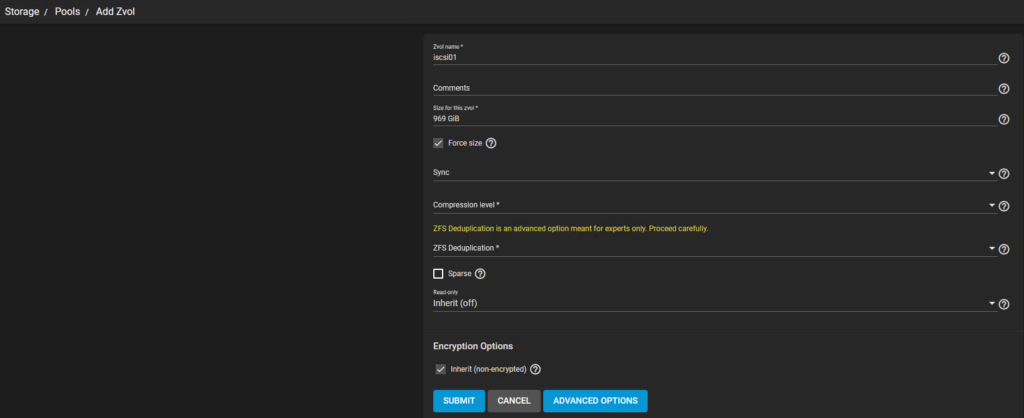

we create a zvol on each pool, sized to match the pool:

(again forcing the size and ignoring the warning):

After all zvols have been created:

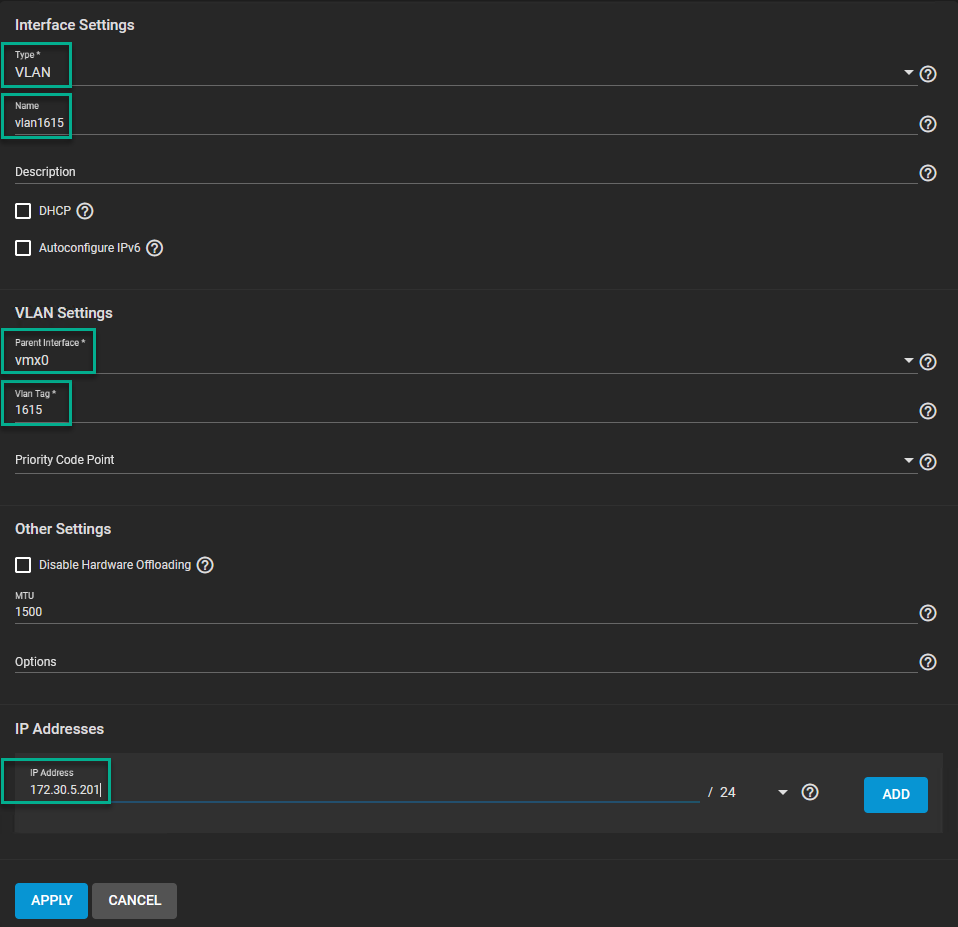
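Why does TrueNAS warn about the zvol size? As far as I understand it, the Force size option exists because TrueNAS does not want a zvol to push the pool beyond roughly 80% utilization. The sketch below assumes that 80% threshold (treat it as an assumption, not a documented constant) and shows why a zvol the size of the whole pool always needs the override.

```python
# Sketch: does a zvol need "Force size"?
# ASSUMPTION: the warning triggers above 80% pool utilization.
FORCE_THRESHOLD = 0.80


def needs_force(zvol_gib, pool_size_gib, pool_used_gib=0.0,
                threshold=FORCE_THRESHOLD):
    """True if creating the zvol would push utilization past the threshold."""
    return (pool_used_gib + zvol_gib) / pool_size_gib > threshold


def max_unforced_size(pool_size_gib, pool_used_gib=0.0,
                      threshold=FORCE_THRESHOLD):
    """Largest zvol that keeps utilization at or below the threshold."""
    return max(0.0, threshold * pool_size_gib - pool_used_gib)


print(needs_force(1024, 1024))      # full-size zvol, as in this lab
print(max_unforced_size(1024))
```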

Next, we configure the network. We create two VLAN interfaces, each on its own physical interface, which separates the traffic of the two iSCSI environments.

We go to:

Here we see our two physical interfaces, one of which holds the management address. This is where we create the two new VLAN interfaces, each with its own address:

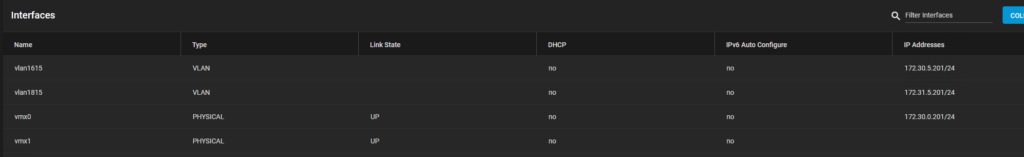

and after both have been created, we see the following:

We test and then apply the changes; after that, the addresses should be reachable for the hosts to connect to.

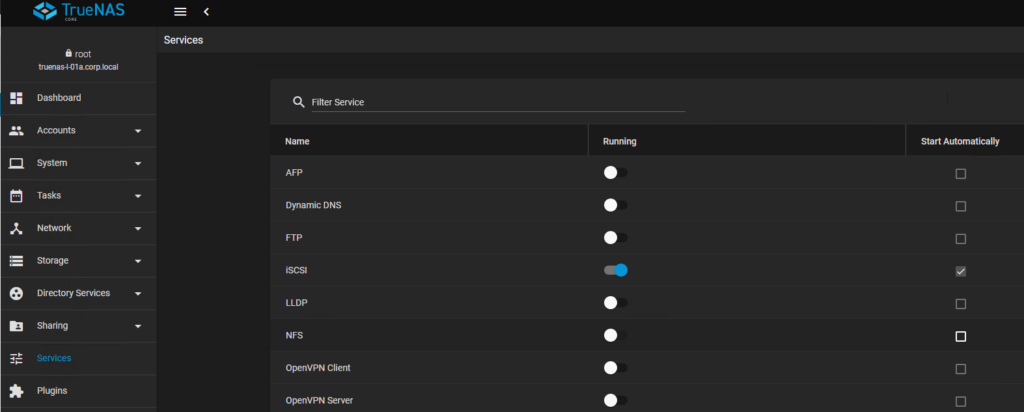
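Before handing the addresses to the hosts, it can help to sanity-check the addressing plan. In this sketch, the VLAN IDs (1615 and 1815) and the management IP come from this post; the parent interface names (`vmx0`, `vmx1`), the /24 prefix lengths and the iSCSI subnets are hypothetical placeholders. It checks that the VLAN IDs are valid, that each VLAN sits on its own physical interface, that the two iSCSI subnets do not overlap, and that the management address stays outside them.

```python
import ipaddress

# From the post: VLAN IDs and the management IP.
# Hypothetical: parent NIC names, prefix lengths and iSCSI addresses.
MGMT = ipaddress.ip_interface("172.30.0.201/24")
VLAN_PLAN = {
    1615: {"parent": "vmx0", "address": "10.16.15.10/24"},  # VCF01
    1815: {"parent": "vmx1", "address": "10.18.15.10/24"},  # VCF02
}


def validate_plan(mgmt, plan):
    """Return a list of problems; an empty list means the plan is consistent."""
    problems = []
    networks = {}
    for vlan_id, cfg in plan.items():
        if not 1 <= vlan_id <= 4094:
            problems.append(f"VLAN {vlan_id}: ID outside 1-4094")
        net = ipaddress.ip_interface(cfg["address"]).network
        networks[vlan_id] = net
        if mgmt.ip in net:
            problems.append(f"VLAN {vlan_id}: management IP inside {net}")
    parents = [cfg["parent"] for cfg in plan.values()]
    if len(set(parents)) != len(parents):
        problems.append("two VLANs share a physical interface")
    ids = list(networks)
    for i, a in enumerate(ids):
        for b in ids[i + 1:]:
            if networks[a].overlaps(networks[b]):
                problems.append(f"VLAN {a} and VLAN {b} subnets overlap")
    return problems


print(validate_plan(MGMT, VLAN_PLAN) or "plan looks consistent")
```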

With the network configured, we enable the iSCSI service and set it to start automatically:

Now we configure the iSCSI part of TrueNAS. For this we go to:

We will be creating:

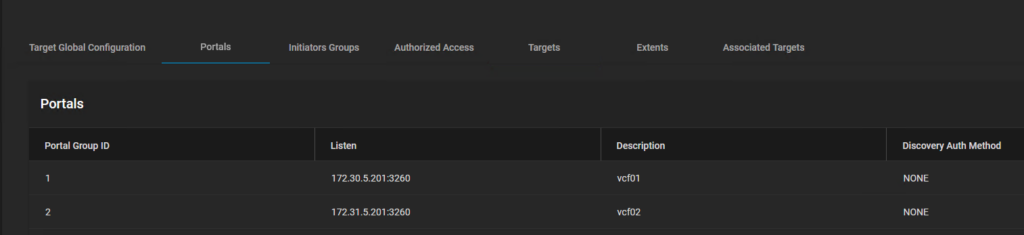

- 2 Portals

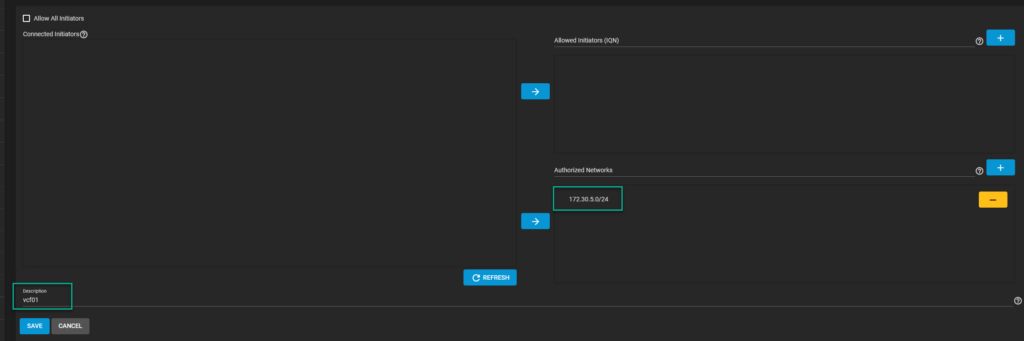

- 2 Initiator Groups

- 2 Targets

- 4 Extents
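How these object types relate can be sketched as a small data model: each VCF environment gets one portal, one initiator group and one target, while the four extents are shared out between the targets. The names and addresses below are hypothetical; only the counts come from this post.

```python
from dataclasses import dataclass

# Hypothetical names and addresses; only the object counts come from the post.


@dataclass(frozen=True)
class Portal:
    name: str
    listen_ip: str             # one of the VLAN interface addresses


@dataclass(frozen=True)
class InitiatorGroup:
    name: str
    authorized_network: str    # addresses allowed to connect


@dataclass(frozen=True)
class Target:
    name: str
    portal: Portal
    group: InitiatorGroup


def build_environment(env, ip, network):
    """One portal, one initiator group and one target per VCF environment."""
    portal = Portal(f"{env}-portal", ip)
    group = InitiatorGroup(f"{env}-initiators", network)
    return Target(f"{env}-target", portal, group)


targets = [
    build_environment("vcf01", "10.16.15.10", "10.16.15.0/24"),
    build_environment("vcf02", "10.18.15.10", "10.18.15.0/24"),
]
extents = [f"iscsi{n:02d}" for n in range(1, 5)]   # one extent per zvol

counts = {
    "portals": len({t.portal for t in targets}),
    "initiator_groups": len({t.group for t in targets}),
    "targets": len(targets),
    "extents": len(extents),
}
print(counts)
```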

Each portal binds to one of the IP addresses we configured in the previous step:

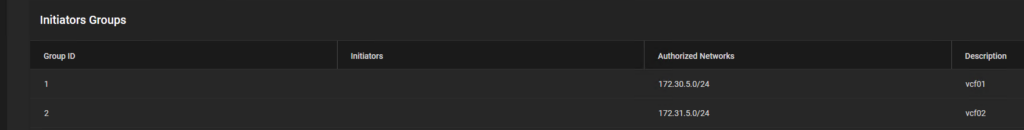

After the portals have been created, we create the initiator groups, in which we define (in our case) the addresses that are allowed to connect:

leading to:

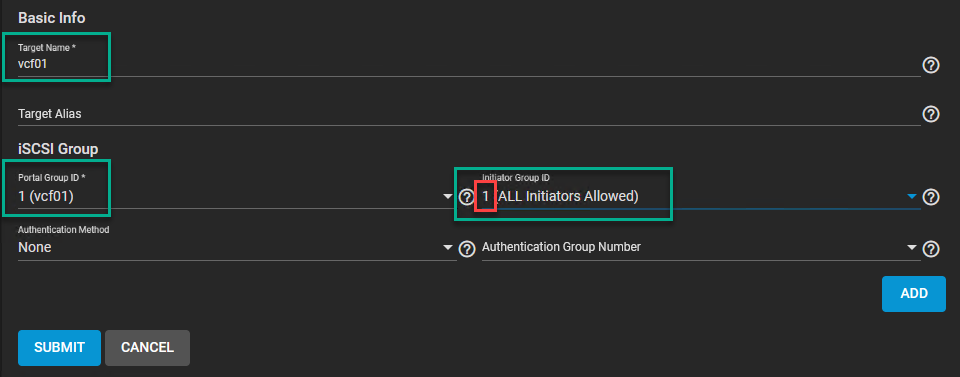

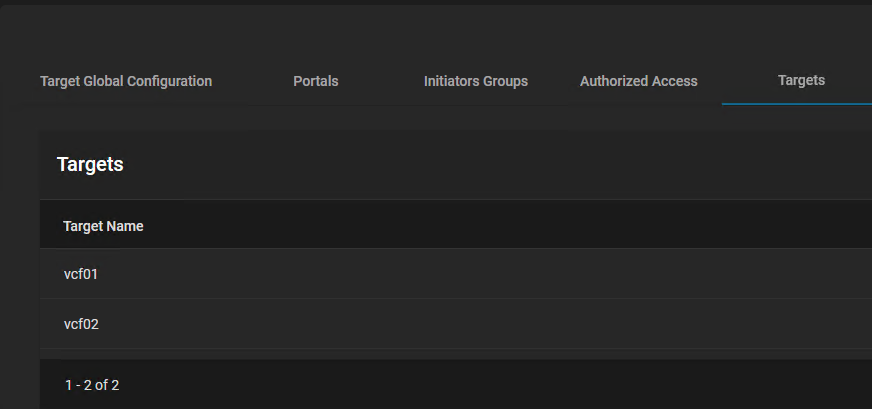
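Conceptually, an initiator group restricted by address acts as an allow-list on the initiator's source IP. The sketch below mimics that check with Python's `ipaddress` module; the networks and host addresses are hypothetical, and this is an illustration of the idea, not TrueNAS's actual implementation.

```python
import ipaddress


def is_authorized(initiator_ip, authorized_networks):
    """True if the initiator's address falls in any authorized network."""
    ip = ipaddress.ip_address(initiator_ip)
    return any(ip in ipaddress.ip_network(net, strict=False)
               for net in authorized_networks)


# Hypothetical groups: VCF01 hosts on VLAN 1615, VCF02 hosts on VLAN 1815.
GROUPS = {
    "vcf01-initiators": ["10.16.15.0/24"],
    "vcf02-initiators": ["10.18.15.0/24"],
}

print(is_authorized("10.16.15.21", GROUPS["vcf01-initiators"]))  # True
print(is_authorized("10.18.15.21", GROUPS["vcf01-initiators"]))  # False
```

Keeping each group limited to its own environment's subnet means a VCF02 host cannot log in to the VCF01 target, even if it can reach the portal.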

After this, we create our two targets:

Leading to:

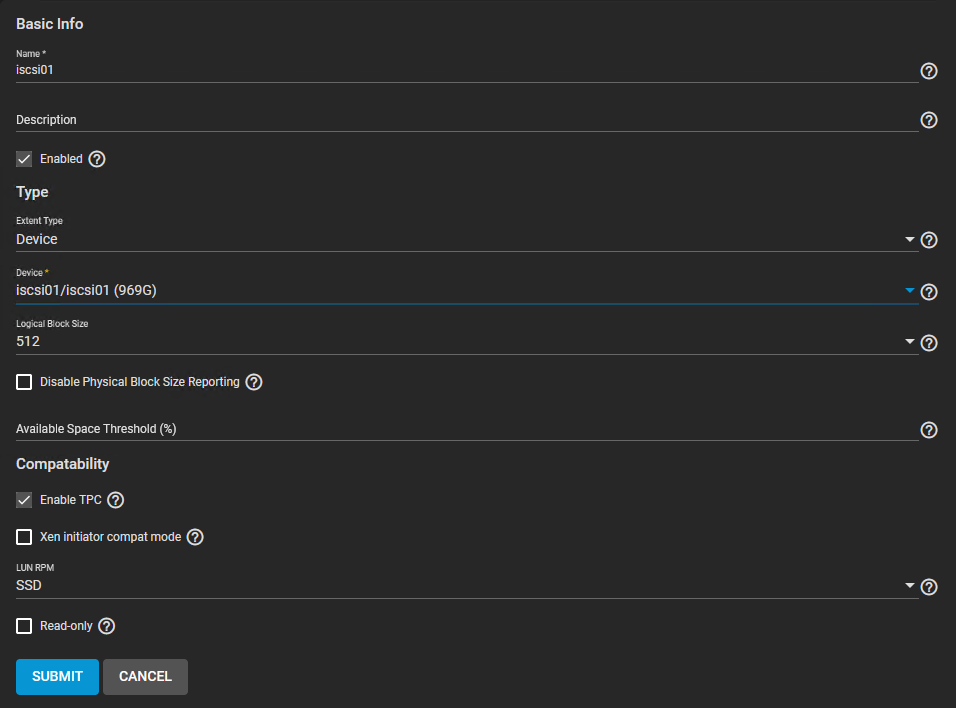

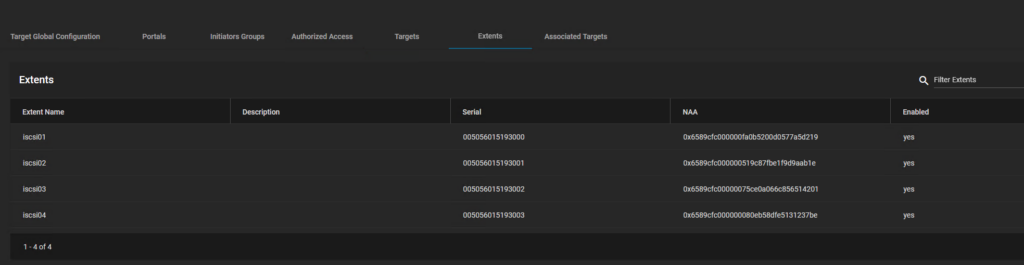

Next, we create our four extents (each backed by one of the zvols):

leading to:

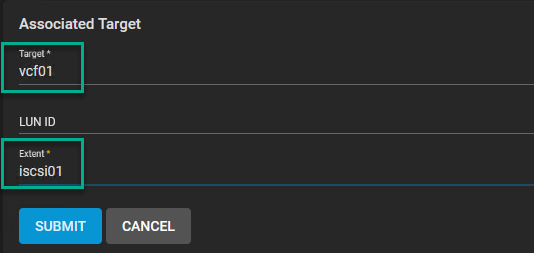

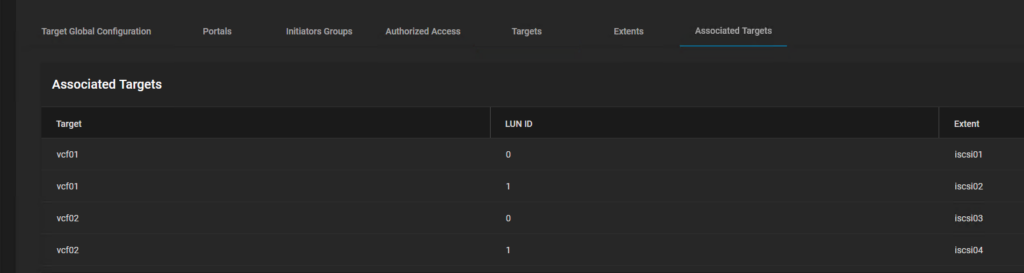
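In this layout each extent should belong to exactly one target. The sketch below models the association step, numbering LUN IDs from 0 within each target and rejecting an extent that shows up under two targets. The even two-and-two split of extents is an assumption for illustration; the post does not say which extent goes to which target.

```python
# ASSUMPTION: two extents per target; target and extent names are hypothetical.
ASSOCIATIONS = {
    "vcf01-target": ["iscsi01", "iscsi02"],
    "vcf02-target": ["iscsi03", "iscsi04"],
}


def assign_luns(associations):
    """Return (target, lun_id, extent) rows, LUN IDs counting from 0 per target."""
    seen = set()
    table = []
    for target, extents in associations.items():
        for lun_id, extent in enumerate(extents):
            if extent in seen:
                raise ValueError(f"extent {extent} is mapped to more than one target")
            seen.add(extent)
            table.append((target, lun_id, extent))
    return table


for row in assign_luns(ASSOCIATIONS):
    print(*row)
```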

And lastly, we associate each extent with the correct target:

leading to:

After this, we can go and configure the ESXi side of things.

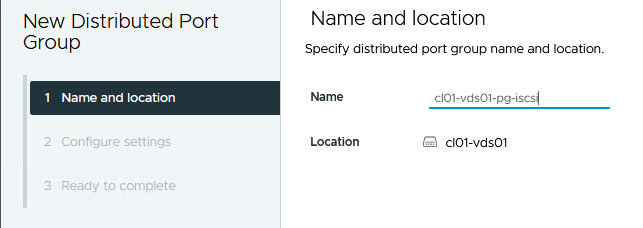

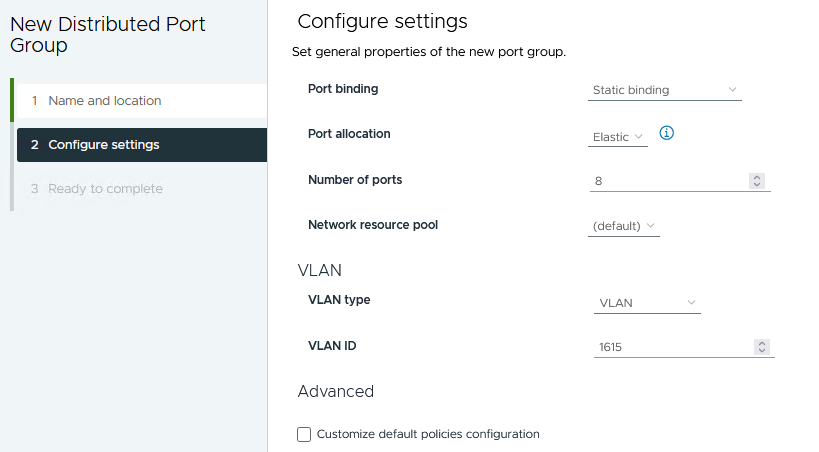

First, we create a distributed port group to connect our vmk to. I will do this on VCF01; the same applies to VCF02, only with a different VLAN ID:

Select the right VLAN ID (1615 for VCF01, 1815 for VCF02):

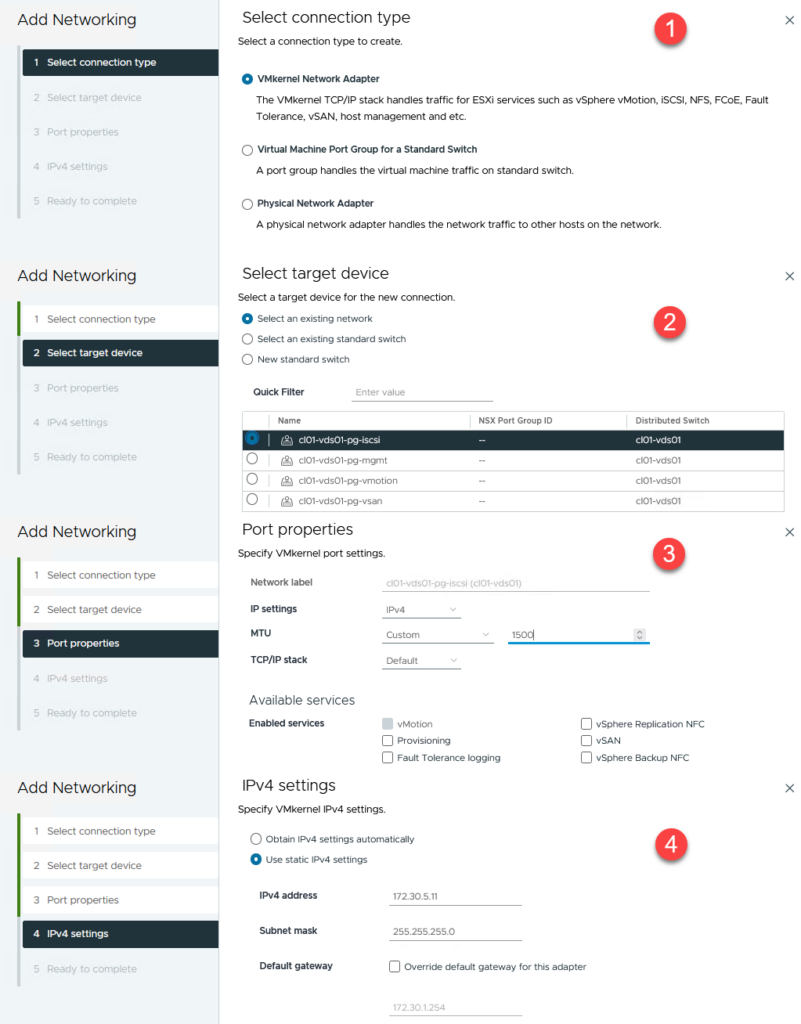
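The VLAN ID on the port group has to match the VLAN interface created on TrueNAS for the same environment; otherwise the vmk and the portal end up in different broadcast domains. Here is a minimal sketch of that mapping; the VLAN IDs are from this post, the port group names are hypothetical.

```python
# VLAN IDs from the post; port group names are hypothetical.
TRUENAS_VLANS = {"VCF01": 1615, "VCF02": 1815}


def port_group_spec(environment):
    """Port group settings that line up with the TrueNAS VLAN interface."""
    vlan_id = TRUENAS_VLANS[environment]
    # Usable 802.1Q VLAN IDs are 1-4094 (0 and 4095 are reserved).
    if not 1 <= vlan_id <= 4094:
        raise ValueError(f"invalid VLAN ID {vlan_id}")
    return {"name": f"{environment.lower()}-iscsi-pg", "vlan_id": vlan_id}


for env in TRUENAS_VLANS:
    print(port_group_spec(env))
```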

Then we create a new vmk on this port group (with an MTU of 1500 and no gateway):

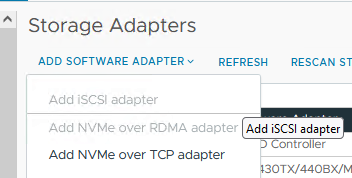
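No gateway is needed because the vmk and the TrueNAS portal sit in the same subnet on the same VLAN, so iSCSI traffic never has to be routed. The sketch below expresses that check, plus a comparison of the vmk MTU with the MTU assumed on the TrueNAS VLAN interface (1500, the Ethernet default). All addresses are hypothetical.

```python
import ipaddress


def needs_gateway(vmk_cidr, portal_ip):
    """A gateway is only required when the portal lies outside the vmk subnet."""
    vmk = ipaddress.ip_interface(vmk_cidr)
    return ipaddress.ip_address(portal_ip) not in vmk.network


def check_vmk(vmk_cidr, vmk_mtu, portal_ip, portal_mtu=1500):
    """Return a list of problems; an empty list means the settings line up."""
    problems = []
    if needs_gateway(vmk_cidr, portal_ip):
        problems.append("portal is outside the vmk subnet: traffic would be routed")
    if vmk_mtu != portal_mtu:
        problems.append(f"MTU mismatch: vmk {vmk_mtu} vs portal {portal_mtu}")
    return problems


# Hypothetical VCF01 host vmk and portal address on VLAN 1615.
print(check_vmk("10.16.15.21/24", 1500, "10.16.15.10") or "vmk settings look consistent")
```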

The next step is to add a new iSCSI adapter to the host. For me this option is greyed out because I already have one; if you don’t, select it. It needs no further configuration during creation.

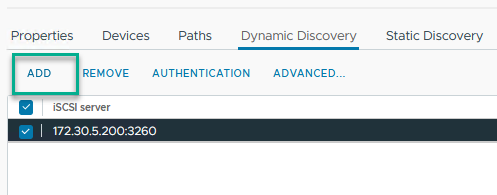

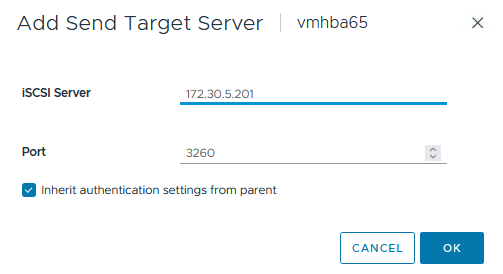

Then we add the target’s IP address as the Dynamic Discovery address:

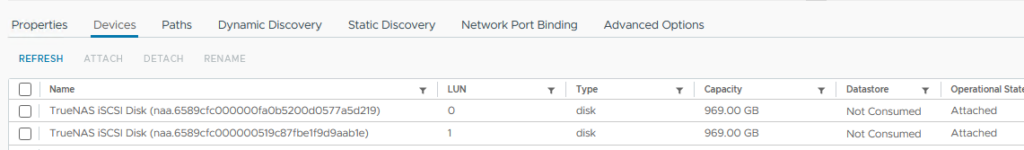
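If the rescan turns up no devices, a quick first check is whether the portal accepts TCP connections on the standard iSCSI port, 3260, from a machine on the same VLAN. This Python sketch only tests the TCP handshake, not an iSCSI login, and the portal address in the example is hypothetical.

```python
import socket

ISCSI_PORT = 3260  # well-known iSCSI target port


def portal_reachable(host, port=ISCSI_PORT, timeout=3.0):
    """True if a TCP connection to host:port can be opened within `timeout`."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and unreachable networks.
        return False


if __name__ == "__main__":
    # Hypothetical VCF01 portal address.
    print(portal_reachable("10.16.15.10"))
```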

After that, we rescan the adapter and the new iSCSI devices appear:

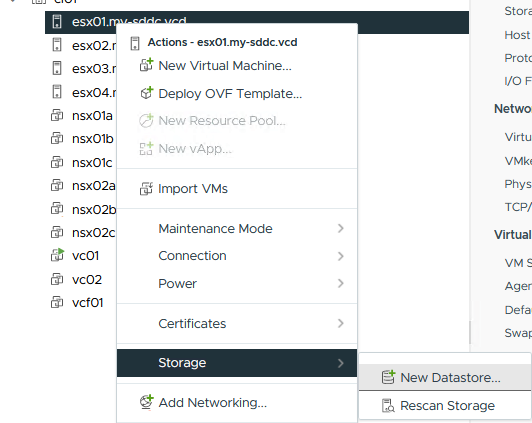

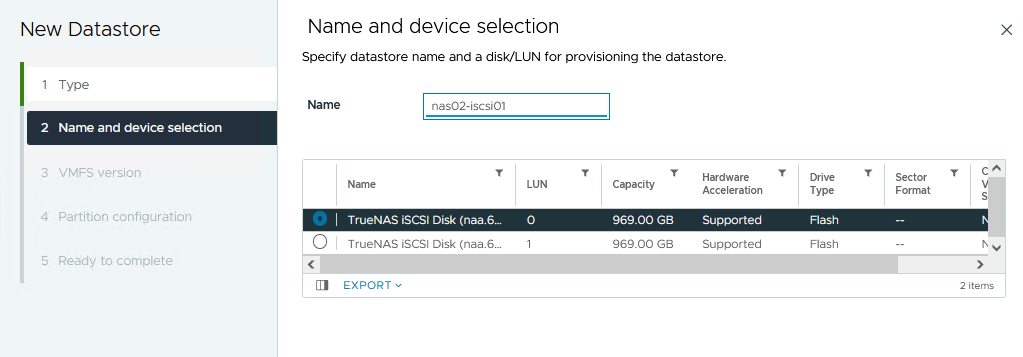

Here we create new datastores:

(the rest is kept default)

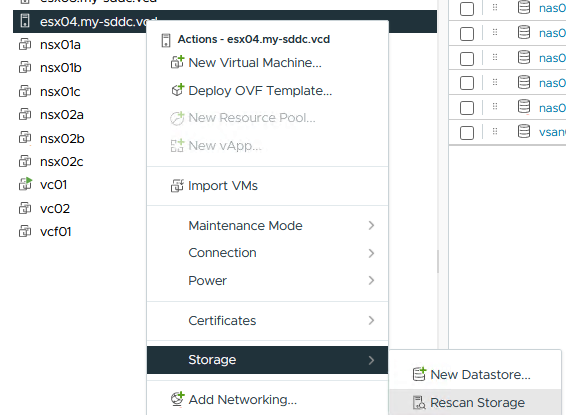

Then we rescan the storage on the other hosts, and the datastore becomes available to all of them.

With that, our iSCSI configuration is complete.
